AI but draw me a map

ChatGPT can dress up as South Carolina, but can it find it on a map?

If you ask ChatGPT to generate a photo of someone dressed like the state of Oklahoma, it will do a pretty decent job. But if you ask it to shade in South Carolina on a U.S. map? That’s harder.

Pam, a You & AI participant, recently asked ChatGPT to color-code a U.S. map for her.

Here is her prompt, and what she got back:

ChatGPT’s attempt to identify specific states on a US map

At least it’s a map of the US?

Why it didn’t work

Notice that ‘Image created’ tag on Pam’s screengrab? That is a sign that ChatGPT is using a model trained to generate pictures from text, not one that can follow precise visual instructions. This sort of generative AI is not very good at tasks that have only one correct answer.

In fact, creating an image of people dressed up as those five states is an easier task for generative AI than shading in states, even though it feels like the harder task to humans. That is because there are a lot of ways to make someone look like they are representing South Carolina, and only one way to shade in the state of South Carolina.

ChatGPT-generated image of people dressed as specific US states

So how do you get ChatGPT to shade in states?

You need to activate ChatGPT’s programming capabilities, not its artistic ones.

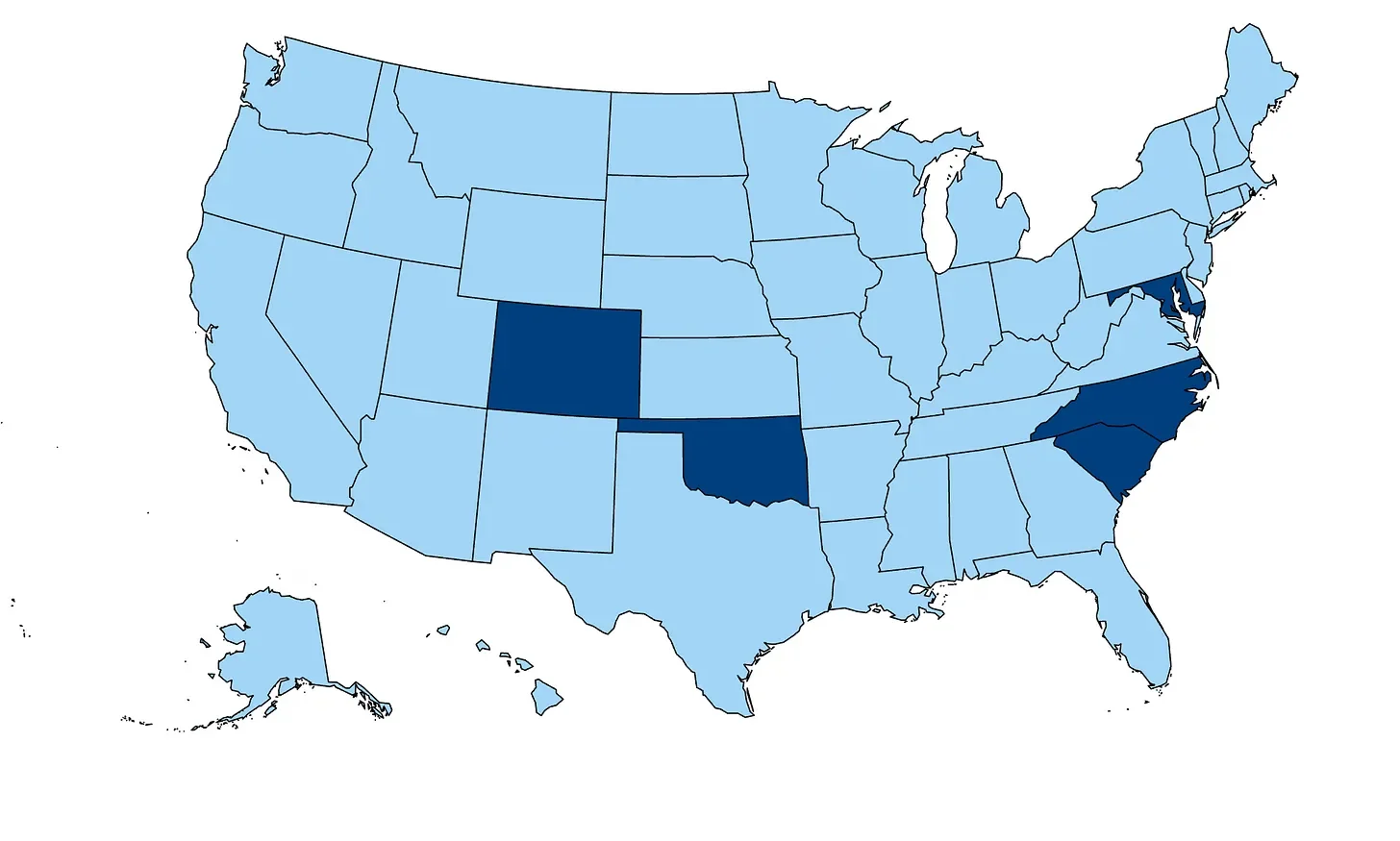

The key is attaching a Scalable Vector Graphics (.svg) file of the United States to your prompt. For most maps, a quick Google search will turn up a usable .svg file. Unlike a pixel-based image, an .svg gives ChatGPT structured data it can work with, enabling it to identify states by ID and change their fill color programmatically.

The result? A perfectly color-coded map:

ChatGPT-generated image using an SVG image to correctly identify states
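For the curious, here is a minimal sketch of the kind of edit an .svg makes possible. It is not the code ChatGPT actually ran, which we did not see. It assumes each state in the file is an element whose `id` is the two-letter state abbreviation (common in freely available US maps, but not guaranteed), and the `shade_states` function and the tiny `SAMPLE_SVG` stand-in are made up for illustration.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"
ET.register_namespace("", SVG_NS)  # keep the output free of "ns0:" prefixes


def shade_states(svg_text: str, colors: dict[str, str]) -> str:
    """Return a copy of the SVG with each listed state's fill changed.

    Assumption: every state is an element whose id is its abbreviation.
    """
    root = ET.fromstring(svg_text)
    remaining = set(colors)
    for element in root.iter():
        state_id = element.get("id")
        if state_id in colors:
            element.set("fill", colors[state_id])
            remaining.discard(state_id)
    if remaining:
        # Fail loudly instead of silently skipping a state that is not in the file.
        raise KeyError(f"No element found for: {sorted(remaining)}")
    return ET.tostring(root, encoding="unicode")


# Tiny stand-in for a real map file, with made-up shapes (hypothetical data).
SAMPLE_SVG = (
    '<svg xmlns="http://www.w3.org/2000/svg">'
    '<path id="SC" d="M0 0h10v10z" fill="#ccc"/>'
    '<path id="OK" d="M20 0h10v10z" fill="#ccc"/>'
    "</svg>"
)

shaded = shade_states(SAMPLE_SVG, {"SC": "#1f77b4"})
```

Because the state is looked up by its ID rather than guessed from pixels, there is no room for "close enough." One caveat: some map files set colors through a `style` attribute or a stylesheet, which can override a plain `fill` attribute, so a real file may need a slightly different edit.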

The process ChatGPT used to shade the map is an example of hybrid routing: the generative model acted as a language interface to activate a different kind of computing capability to complete the task. You get the best of both worlds: language for ease of interaction and structured logic for precision.

For those of you in the current You & AI cohort, we’ll dive into more hybrid routing examples in this week’s sessions.

When AI is wrong, it’s not always about the model’s intelligence or the wording of the prompt. Sometimes it’s about the tools.

The original prompt failed not because the idea was too complex, but because the wrong model was in the driver’s seat. The trick isn’t to prompt more carefully; it’s to activate the capability that can actually complete the task.

Coauthored index: 90% Sarah | 10% AI